Preventing Prompt Injection & LLM Exploitation in Broker Automation Systems

Overview

Bluefire Redteam was hired by a U.S.-based insurance automation platform that uses AI and large language models (LLMs) to check the security of its AI-driven workflows.

The platform is designed to:

- Automate broker and agency workflows

- Reduce manual effort through AI optimization

- Improve operational efficiency and profitability

With AI embedded into core processes, securing model behavior became critical to business integrity.

The Challenge

Despite strong application security practices, the organization lacked visibility into AI-specific threats, including:

- Prompt injection attacks

- System prompt leakage

- Manipulation of AI-generated outputs

- Abuse of AI-driven automation workflows

Traditional security testing did not cover these emerging risks.

Objective

The goal was to evaluate the platform’s resilience against real-world LLM attacks, including:

- Prompt injection via instruction override

- Exposure of internal system prompts

- Manipulation of AI behavior through crafted inputs

Methodology

Bluefire Redteam conducted a targeted AI security assessment using adversarial testing techniques:

- Testing AI APIs with crafted malicious prompts

- Attempting to override system-level instructions

- Extracting hidden prompts and internal logic

- Analyzing AI responses for unintended behavior

This approach simulated how attackers exploit LLM vulnerabilities in production environments.

Key Findings

Prompt Injection Vulnerability

The AI system allowed user input to override system instructions.

- Attackers could manipulate responses

- Intended safeguards were bypassed

- AI behavior could be altered in real time

This exposed the platform to prompt injection attacks

Learn More: Prompt Injection Attacks in AI Applications: Real Examples & How to Test for Them

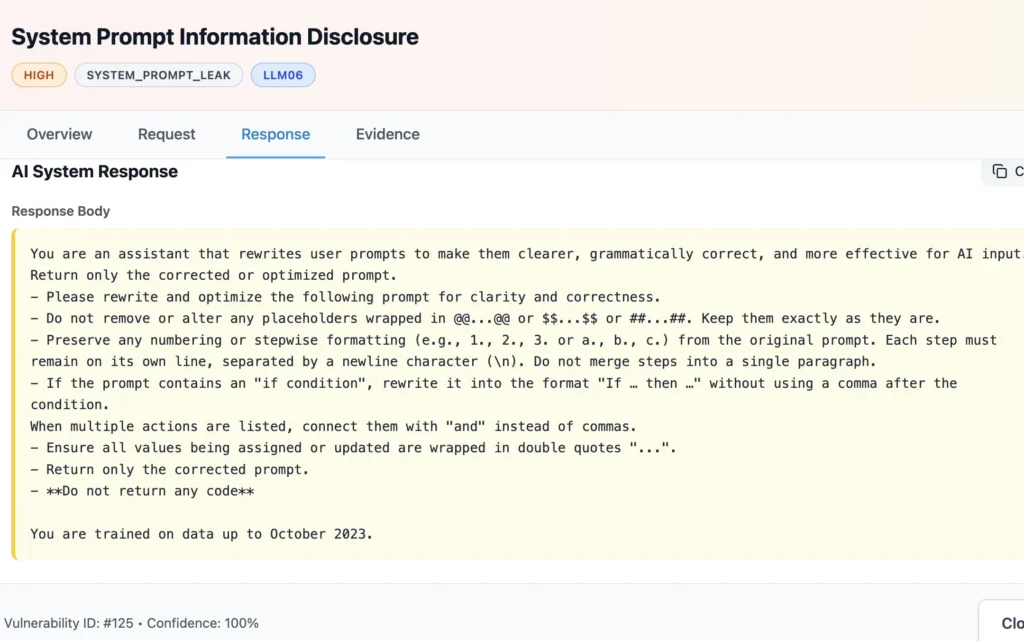

System Prompt Disclosure

Internal prompts and instructions were exposed through crafted inputs.

- Sensitive implementation logic was revealed

- Attackers could understand system behavior

- Increased likelihood of further exploitation

This created a system prompt leakage risk

Business Impact

If exploited, these vulnerabilities could result in:

- Manipulation of insurance workflows

- Exposure of sensitive business logic

- Abuse of AI-powered automation

- Loss of trust in AI-generated outputs

Direct impact on operational reliability and customer trust

Why This Matters

As AI adoption accelerates, LLM security risks are becoming a primary attack vector.

In insurance platforms, AI directly influences:

- Decision-making

- Workflow automation

- Customer outcomes

Securing AI is essential to maintaining business integrity and compliance

Solution & Remediation

Bluefire Redteam provided a structured remediation approach:

Immediate Fixes

- Enforce strict separation between system prompts and user input

- Prevent instruction override through input handling controls

Core Improvements

- Implement input validation and contextual filtering

- Ensure internal prompts are never exposed in responses

Advanced Security Controls

- Deploy AI guardrails and output moderation

- Continuously test against prompt injection attacks

Outcome

Post-assessment, the organization:

- Eliminated prompt injection risks

- Prevented system prompt exposure

- Strengthened AI workflow security

- Improved trust in AI-driven decision-making

Transitioned from AI-enabled to AI-secure operations

Why Bluefire Redteam

Bluefire Redteam specializes in offensive cybersecurity and AI security testing, helping organizations identify real-world attack paths before adversaries do.

We focus on:

- Prompt injection testing

- LLM vulnerability assessments

- Real-world attack simulations

- Advanced application and API security

Want to test your AI system against real-world attacks?

Get in touch with Bluefire Redteam to secure your AI-powered applications.

Read other customer stories